Reference Links in Retrieval Q&A

Chatbots and Q&A systems often return reference links alongside an answer, pointing users to documentation or pages that contain more information or the source of the answer.

EXAMPLE: Q&A from Arize-Phoenix Documentation

QUESTION: What other models does Arize Phoenix support beyond OpenAI for running Evals?

ANSWER: Phoenix does support a large set of LLM models through the model object. Phoenix supports OpenAI (GPT-4, GPT-4-32k, GPT-3.5 Turbo, GPT-3.5 Instruct, etc…), Azure OpenAI, Google Palm2 Text Bison, and All AWS Bedrock models (Claude, Mistral, etc…).

REFERENCE LINK: https://arize.com/docs/phoenix/sdk-api-reference/python/arize-phoenix-evals

This Eval checks whether the reference link returned answers the question asked in a conversation.

We are continually iterating our templates; view the most up-to-date template on GitHub.

How to run the Citation Eval

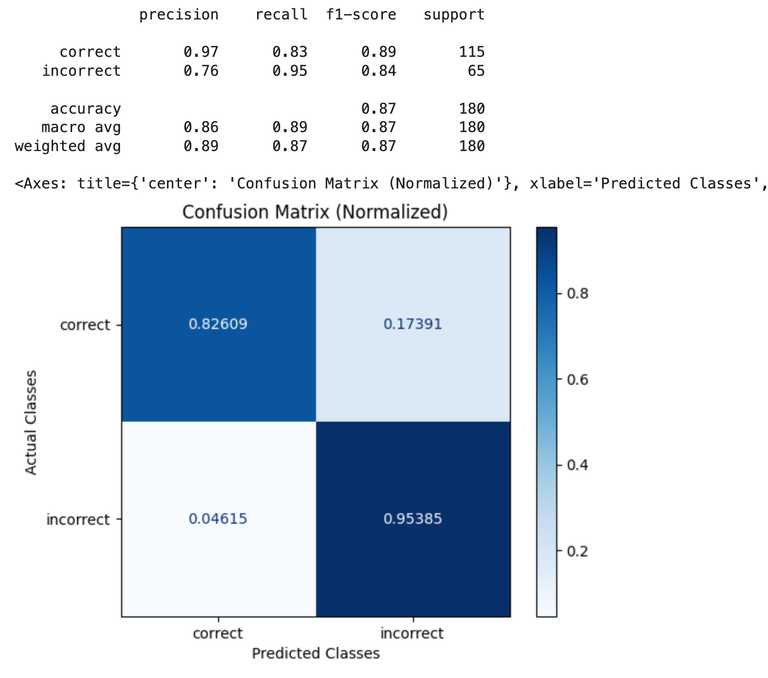
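As a rough sketch of what running this eval can look like, the snippet below pairs each conversation with the text of the page behind its reference link and passes the result to `llm_classify`. It assumes that `phoenix.evals` exposes `llm_classify`, `OpenAIModel`, `REF_LINK_EVAL_PROMPT_RAILS_MAP`, and `REF_LINK_EVAL_PROMPT_TEMPLATE_STR`, and that the template reads columns named `input` and `reference`. These names and keyword arguments can differ between Phoenix releases, so check them against the installed version. The helper names `build_eval_records` and `run_citation_eval` are hypothetical and exist only for this illustration.

```python
def build_eval_records(conversations, reference_texts):
    """Pair each conversation with the text of the page its reference link points to.

    The column names "input" and "reference" are assumptions about the
    variables the reference-link template expects.
    """
    if len(conversations) != len(reference_texts):
        raise ValueError("conversations and reference_texts must have the same length")
    return [
        {"input": conversation, "reference": reference}
        for conversation, reference in zip(conversations, reference_texts)
    ]


def run_citation_eval(records, model_name="gpt-4o"):
    """Label each record with the reference-link eval (requires Phoenix and an OpenAI key)."""
    # Imported here so build_eval_records stays usable without Phoenix installed.
    import pandas as pd
    from phoenix.evals import (  # assumed import path; verify for your version
        REF_LINK_EVAL_PROMPT_RAILS_MAP,
        REF_LINK_EVAL_PROMPT_TEMPLATE_STR,
        OpenAIModel,
        llm_classify,
    )

    df = pd.DataFrame(records)
    model = OpenAIModel(model=model_name, temperature=0.0)

    # The rails restrict the eval output to the template's allowed labels.
    rails = list(REF_LINK_EVAL_PROMPT_RAILS_MAP.values())

    return llm_classify(
        df,
        model=model,
        template=REF_LINK_EVAL_PROMPT_TEMPLATE_STR,
        rails=rails,
        provide_explanation=True,  # also ask the model to explain each label
    )
```

Calling `run_citation_eval(build_eval_records(conversations, reference_texts))` should return one label per row. Note that the `reference` value here is the text of the linked page, not the URL itself, which is an assumption about how the template is meant to be used.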

Benchmark Results

This benchmark was obtained using the notebook below. It was run on a handcrafted ground truth dataset consisting of questions about the Arize platform. That dataset is available here. Each example in the dataset was evaluated using the REF_LINK_EVAL_PROMPT_TEMPLATE_STR above, then the resulting labels were compared against the ground truth labels in the benchmark dataset to generate the confusion matrices below.

Notebook: Google Colab (colab.research.google.com)

| Reference Link Evals | GPT-4o |
|---|---|
| Precision | 0.96 |
| Recall | 0.79 |
| F1 | 0.87 |
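To make the scores above concrete, here is a minimal sketch of how eval labels can be compared against ground truth labels to get confusion-matrix counts, and how precision, recall, and F1 follow from those counts. The label strings `correct` and `incorrect`, the choice of `correct` as the positive class, and the toy data are assumptions for illustration only. This is not the benchmark notebook's code or data.

```python
from collections import Counter


def confusion_counts(predicted, truth, positive="correct"):
    """Count true/false positives and negatives for a binary label set."""
    counts = Counter(tp=0, fp=0, fn=0, tn=0)
    for pred, actual in zip(predicted, truth):
        if pred == positive and actual == positive:
            counts["tp"] += 1
        elif pred == positive:
            counts["fp"] += 1
        elif actual == positive:
            counts["fn"] += 1
        else:
            counts["tn"] += 1
    return counts


def f1_score(precision, recall):
    """F1 is the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def precision_recall_f1(counts):
    """Derive precision, recall, and F1 from confusion-matrix counts."""
    tp, fp, fn = counts["tp"], counts["fp"], counts["fn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f1_score(precision, recall)


# Toy labels (made up for this sketch, not taken from the benchmark dataset).
predicted = ["correct", "correct", "incorrect", "incorrect", "correct"]
truth = ["correct", "incorrect", "correct", "incorrect", "correct"]

counts = confusion_counts(predicted, truth)
precision, recall, f1 = precision_recall_f1(counts)
print(dict(counts), precision, recall, f1)

# Consistency check on the reported table: precision 0.96 and recall 0.79
# give an F1 that rounds to 0.87.
print(round(f1_score(0.96, 0.79), 2))
```

The last line shows that the reported F1 is what the reported precision and recall imply: 2 × 0.96 × 0.79 / (0.96 + 0.79) ≈ 0.867.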