Releases · Arize-ai/phoenix

09.29.2025: Day 0 support for Claude Sonnet 4.5 ⚡

Available in Phoenix 12.1+

09.27.2025: Dataset Splits 📊

Available in Phoenix 12.0+

Add support for custom dataset splits to organize examples by category.

09.26.2025: Session Annotations 🗂️

Available in Phoenix 12.0+

09.25.2025: Repetitions 🔁

Available in Phoenix 11.38+

09.24.2025: Custom HTTP headers for requests in Playground 🛠️

Available in Phoenix 11.36+

09.23.2025: Repetitions in experiment compare slideover 🔄

Available in Phoenix 11.36+

09.22.2025: Helm configurable image registry & IPv6 support 🌐

Available in Phoenix 11.35+

09.17.2025: Experiment compare details slideover in list view 🔍

Available in Phoenix 11.34+

09.15.2025: Prompt Labels 🏷️

Available in Phoenix 11.33+

09.12.2025: Enable Paging in Experiment Compare Details 📄

Available in Phoenix 11.33+

J / K). Pagination

09.08.2025: Experiment Annotation Popover in Detail View 🔍

Available in Phoenix 11.33+

09.04.2025: Experiment Lists Page Frontend Enhancements 💻

Available in Phoenix 11.32+

09.03.2025: Add Methods to Log Document Annotations 📜

Available in Phoenix 11.31+

Added client-side support for logging document annotations with a new log_document_annotations(...) method, supporting both sync and async API calls.

08.28.2025: New arize-phoenix-client Package 📦

arize-phoenix-client is a lightweight, fully-featured package for interacting with Phoenix. It lets you manage datasets, experiments, prompts, spans, annotations, and projects without needing a local Phoenix installation.

08.22.2025: New Trace Timeline View 🔭

Available in Phoenix 11.26+

08.20.2025: New Experiment and Annotation Quick Filters 🏎️

Available in Phoenix 11.25+

08.14.2025: Trace Transfer for Long-Term Storage 📦

Available in Phoenix 11.23+

08.12.2025: UI Design Overhauls 🎨

Available in Phoenix 11.22+

08.07.2025: Improved Error Handling in Prompt Playground ⚠️

Available in Phoenix 11.20+

08.06.2025: Expanded Search Capabilities 🔍

Available in Phoenix 11.19+

08.05.2025: Claude Opus 4-1 Support 🤖

Available in Phoenix 11.19+

08.04.2025: Manual Project Creation & Trace Duplication 📂

Available in Phoenix 11.19+

08.03.2025: Delete Spans via REST API 🧹

Available in Phoenix 11.18+

You can now delete spans using the REST API, enabling efficient data redaction and giving teams greater control over trace data.

07.29.2025: Google GenAI Evals 🌐

phoenix-evals: Added support for Google’s Gemini models via the Google GenAI SDK: multimodal, async, and ready to scale. Huge shoutout to Siddharth Sahu for this contribution!

07.25.2025: Project Dashboards 📈

Available in Phoenix 11.12+

07.25.2025: Average Metrics in Experiment Comparison Table 📊

Available in Phoenix 11.12+

07.21.2025: Project and Trace Management via GraphQL 📤

Available in Phoenix 11.9+

Create new projects and transfer traces between them via GraphQL, with full preservation of annotations and cost data.

07.18.2025: OpenInference Java ✨

OpenInference Java now offers full OpenTelemetry-compatible tracing for AI apps, including auto-instrumentation for LangChain4j and semantic conventions.

07.13.2025: Experiments Module in phoenix-client 🧪

Available in Phoenix 11.7+

New experiments feature set in phoenix-client, enabling sync and async execution with task runs, evaluations, rate limiting, and progress reporting.

07.09.2025: Baseline for Experiment Comparisons 🔁

Available in Phoenix 11.6+

07.07.2025: Database Disk Usage Monitor 🛑

Available in Phoenix 11.5+

Monitor database disk usage, notify admins when nearing capacity, and automatically block writes when critical thresholds are reached.
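
As a rough illustration of the threshold behavior described in this entry (not Phoenix's actual implementation), the sketch below classifies disk usage against two levels. The names `classify_usage`, `check_path`, `WARN_RATIO`, and `BLOCK_RATIO`, and the threshold values themselves, are assumptions made for this example.

```python
import shutil

# Hypothetical thresholds for illustration; Phoenix's real values may differ.
WARN_RATIO = 0.80   # notify admins at or above this usage
BLOCK_RATIO = 0.95  # block writes at or above this usage


def classify_usage(used: int, total: int) -> str:
    """Return 'ok', 'warn', or 'block' for the given byte counts."""
    if total <= 0:
        raise ValueError("total must be positive")
    ratio = used / total
    if ratio >= BLOCK_RATIO:
        return "block"
    if ratio >= WARN_RATIO:
        return "warn"
    return "ok"


def check_path(path: str = ".") -> str:
    """Classify the disk that holds `path` using shutil.disk_usage."""
    usage = shutil.disk_usage(path)
    return classify_usage(usage.used, usage.total)
```

A caller would notify admins on 'warn' and reject inserts on 'block'; keeping the classification separate from the disk lookup makes the policy easy to test.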

07.03.2025: Cost Summaries in Trace Headers 💸

Available in Phoenix 11.4+

07.02.2025: Cursor MCP Button ⚡️

Available in Phoenix 11.3+

@arizeai/phoenix-mcp@2.2.0 also includes a new tool called phoenix-support, letting agents like Cursor auto-instrument your apps using Phoenix and OpenInference best practices.

06.25.2025: Cost Tracking 💰

Available in Phoenix 11.0+

06.25.2025: New Phoenix Cloud ☁️

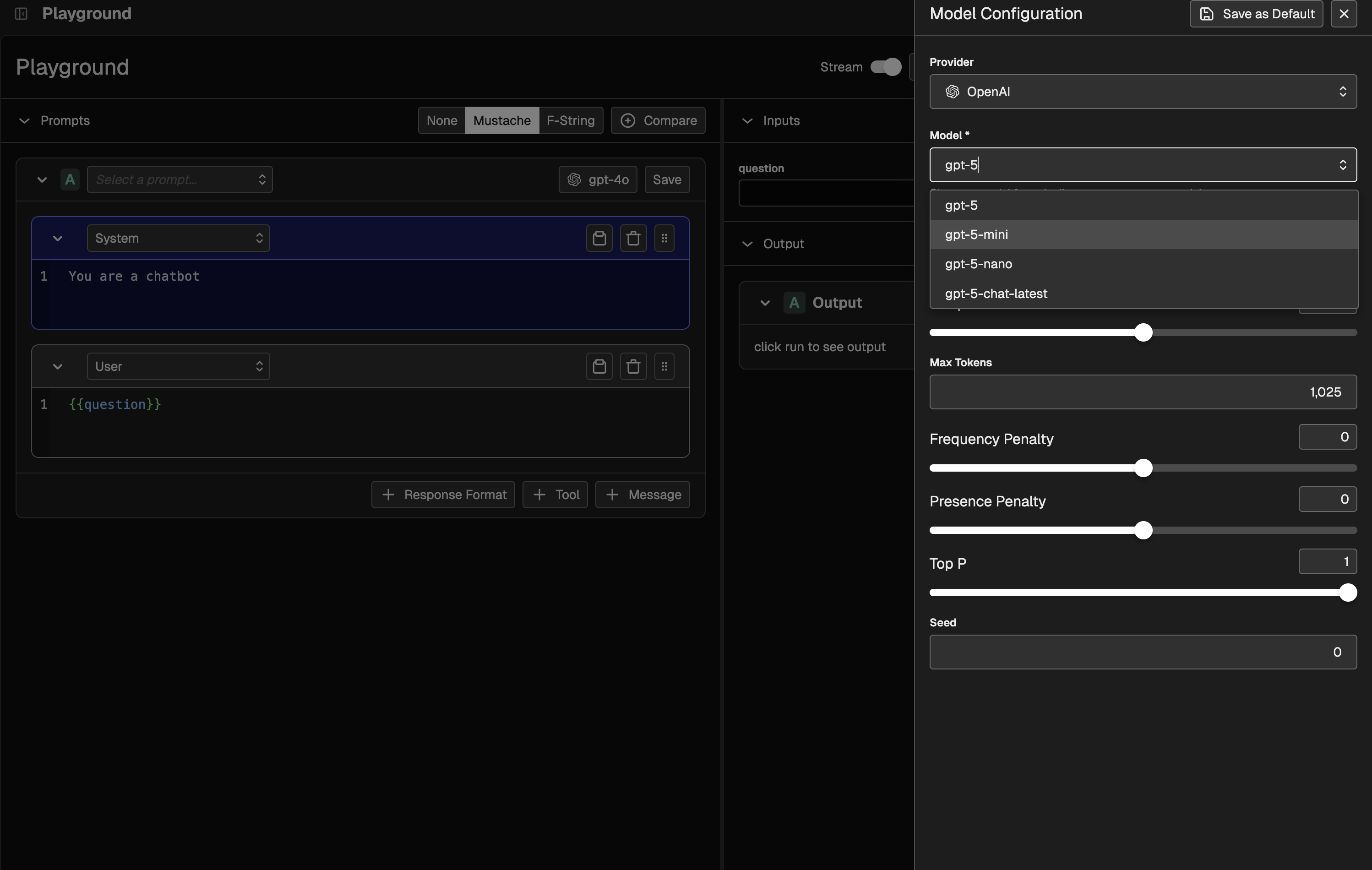

06.25.2025: Amazon Bedrock Support in Playground 🛝

Available in Phoenix 10.15+

06.13.2025: Session Filtering 🪄

Available in Phoenix 10.12+

Filter sessions by session_id across the API and UI, making it easier to pinpoint and inspect specific sessions.

06.13.2025: Enhanced Span Creation and Logging 🪐

Available in Phoenix 10.12+

Now you can create spans directly via a new POST API and client methods, with helpers to safely regenerate IDs and prevent conflicts on insertion.
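
To show why regenerating IDs avoids insertion conflicts, here is a minimal sketch of the idea, not the Phoenix client's own helper. The `Span` shape and the `regenerate_ids` function are hypothetical; the only assumption carried over from OpenTelemetry is the ID width (16-byte trace IDs, 8-byte span IDs).

```python
import secrets
from typing import Optional, TypedDict


class Span(TypedDict):
    # Hypothetical minimal span record for this example.
    trace_id: str
    span_id: str
    parent_id: Optional[str]


def regenerate_ids(spans: list[Span]) -> list[Span]:
    """Return copies of `spans` with fresh IDs, keeping parent links intact."""
    # One new ID per distinct old ID, so spans in the same trace stay together.
    trace_map = {s["trace_id"]: secrets.token_hex(16) for s in spans}
    span_map = {s["span_id"]: secrets.token_hex(8) for s in spans}
    result: list[Span] = []
    for s in spans:
        parent = s["parent_id"]
        result.append(Span(
            trace_id=trace_map[s["trace_id"]],
            span_id=span_map[s["span_id"]],
            # Parents that are not part of this batch are left unchanged.
            parent_id=None if parent is None else span_map.get(parent, parent),
        ))
    return result
```

Because every old ID maps to exactly one new ID, the copied spans keep their tree structure while no longer colliding with the originals.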

06.12.2025: Dataset Filtering 🔍

Available in Phoenix 10.11+

06.06.2025: Experiment Progress Graph 📊

Available in Phoenix 10.9+

06.04.2025: Ollama Support in Playground 🛝

06.03.2025: Deploy Phoenix via Helm ☸️

Available in Phoenix 10.6+

05.30.2025: xAI and Deepseek Support in Playground 🛝

Available in Phoenix 10.7+

05.20.2025: Datasets and Experiment Evaluations in the JS Client 🧪

- getExperiment - allows you to retrieve an Experiment to view its results, and run evaluations on it

- evaluateExperiment - allows you to evaluate previously run Experiments using LLM as a Judge or Code-based evaluators

- createDataset - allows you to create Datasets in Phoenix using the client

- appendDatasetExamples - allows you to append additional examples to a Dataset

05.14.2025: Experiments in the JS Client 🔬

Experiments CLI output

- Native tracing of tasks and evaluators

- Async concurrency queues

- Support for any evaluator (including bring your own evals)

05.09.2025: Annotations, Data Retention Policies, Hotkeys 📓

Available in Phoenix 9.0+

Annotation Improvements

- A host of improvements to Annotations, including one-to-many support, API access, annotation configs, and custom metadata

- Customizable data retention policies

- Hotkeys! 🔥

05.05.2025: OpenInference Google GenAI Instrumentation 🧩

04.30.2025: Span Querying & Data Extraction for PX Client 📊

Available in Phoenix 8.30+

The PX client now supports the SpanQuery DSL for more advanced span querying. Additionally, a get_spans_dataframe method has been added to facilitate easier data extraction for span-related information.

04.28.2025: TLS Support for Phoenix Server 🔐

Available in Phoenix 8.29+

Phoenix now supports Transport Layer Security (TLS) for both HTTP and gRPC connections, enabling encrypted communication and optional mutual TLS (mTLS) authentication. This enhancement provides a more secure foundation for production deployments.

04.28.2025: Improved Shutdown Handling 🛑

Available in Phoenix 8.28+

When stopping the Phoenix server via Ctrl+C, the shutdown process now exits cleanly with code 0 to reflect intentional termination. Previously, this would trigger a traceback with KeyboardInterrupt, misleadingly indicating a failure.
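
The general pattern behind this kind of fix can be sketched as follows. This is a generic Python illustration, not Phoenix's server code; `serve_forever` and `main` are hypothetical names standing in for the real entry point.

```python
import sys
import time


def serve_forever() -> None:
    """Stand-in for a long-running server loop."""
    while True:
        time.sleep(1)


def main(run=serve_forever) -> int:
    """Run the server and return a process exit code."""
    try:
        run()
    except KeyboardInterrupt:
        # Ctrl+C is an intentional stop, so report success instead of
        # letting the KeyboardInterrupt traceback reach the user.
        print("Shutting down.", file=sys.stderr)
        return 0
    return 0

# A script entry point would call: sys.exit(main())
```

Returning the exit code from `main` rather than calling `sys.exit` inside the handler keeps the function easy to exercise in tests.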

04.25.2025: Scroll Selected Span Into View 🖱️

Available in Phoenix 8.27+

04.18.2025: Tracing for MCP Client-Server Applications 🔌

Available in Phoenix 8.26+

Introducing openinference-instrumentation-mcp, a new package in the OpenInference OSS library that enables seamless OpenTelemetry context propagation across MCP clients and servers. It automatically creates spans, injects and extracts context, and connects the full trace across services to give you complete visibility into your MCP-based AI systems.

Big thanks to Adrian Cole and Anuraag Agrawal for their contributions to this feature.

04.16.2025: API Key Generation via API 🔐

Available in Phoenix 8.26+

Phoenix now supports programmatic API key creation through a new endpoint, making it easier to automate project setup and trace logging. To enable this, set the PHOENIX_ADMIN_SECRET environment variable in your deployment.

04.15.2025: Display Tool Call and Result IDs in Span Details

Available in Phoenix 8.25+

04.09.2025: Project Management API Enhancements ✨

Available in Phoenix 8.24+

This update enhances the Project Management API with more flexible project identification. We’ve added support for identifying projects by both ID and hex-encoded name and introduced a new _get_project_by_identifier helper function.
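
For readers unfamiliar with hex-encoded names, the sketch below shows one way to produce and reverse such an identifier. It assumes the name is encoded as UTF-8 bytes before hex encoding; the entry does not state the exact encoding, and `encode_project_name` and `decode_project_name` are hypothetical helpers, not part of the Phoenix API.

```python
def encode_project_name(name: str) -> str:
    """Hex-encode a project name's UTF-8 bytes (assumed encoding)."""
    return name.encode("utf-8").hex()


def decode_project_name(hex_name: str) -> str:
    """Reverse encode_project_name."""
    return bytes.fromhex(hex_name).decode("utf-8")


print(encode_project_name("default"))  # 64656661756c74
```

Hex encoding keeps names with slashes, spaces, or other special characters safe to place in a URL path segment.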

04.09.2025: New REST API for Projects with RBAC 📽️

Available in Phoenix 8.23+

04.03.2025: Phoenix Client Prompt Tagging 🏷️

Available in Phoenix 8.22+

04.02.2025: Improved Span Annotation Editor ✍️

Available in Phoenix 8.21+

04.01.2025: Support for MCP Span Tool Info in OpenAI Agents SDK 🔨

Available in Phoenix 8.20+

Newly added to the OpenAI Agents SDK is support for MCP Span Info, allowing for the tracing and extraction of useful information about MCP tool listings. Use the Phoenix OpenAI Agents SDK for powerful agent tracing.

03.27.2025: Span View Improvements 👀

Available in Phoenix 8.20+

03.24.2025: Tracing Configuration Tab 🖌️

Available in Phoenix 8.19+

03.21.2025: Environment Variable Based Admin User Configuration 🗝️

Available in Phoenix 8.17+

You can now preconfigure admin users at startup using an environment variable, making it easier to manage access during deployment. Admins defined this way are automatically seeded into the database and ready to log in.

03.20.2025: Delete Experiment from Action Menu 🗑️

Available in Phoenix 8.16+

03.19.2025: Access to New Integrations in Projects 🔌

Available in Phoenix 8.15+

03.18.2025: Resize Span, Trace, and Session Tables 🔀

Available in Phoenix 8.14+

03.14.2025: OpenAI Agents Instrumentation 📡

Available in Phoenix 8.13+

03.07.2025: Model Config Enhancements for Prompts 💡

Available in Phoenix 8.11+

03.07.2025: New Prompt Playground, Evals, and Integration Support 🦾

Available in Phoenix 8.9+

03.06.2025: Project Improvements 📽️

Available in Phoenix 8.8+

02.19.2025: Prompts 📃

Available in Phoenix 8.0+

- Versioning & Iteration: Seamlessly manage prompt versions in both Phoenix and your codebase.

- New TypeScript Client: Sync prompts with your JavaScript runtime, now with native support for OpenAI, Anthropic, and the Vercel AI SDK.

- New Python Client: Sync templates and apply them to AI SDKs like OpenAI, Anthropic, and more.

- Standardized Prompt Handling: Native normalization for OpenAI, Anthropic, Azure OpenAI, and Google AI Studio.

- Enhanced Metadata Propagation: Track prompt metadata on Playground spans and experiment metadata in dataset runs.

02.18.2025: One-Line Instrumentation ⚡️

Available in Phoenix 8.0+

With register(auto_instrument=True), you can enable automatic instrumentation in your application, which will set up instrumentors based on your installed packages.

01.18.2025: Automatic & Manual Span Tracing ⚙️

Available in Phoenix 7.9+

Manual tracing options include the @tracer.chain decorator and using the tracer in a with clause.

Check out the docs for more on how to use tracer objects.

12.09.2024: Sessions 💬

Available in Phoenix 7.0+

11.18.2024: Prompt Playground 🛝

Available in Phoenix 6.0+

- Replay individual spans with modified prompts, or run full Datasets through your variations.

- Easily test different models, prompts, tools, and output formats side-by-side, directly in the platform.

- Automatically capture traces as Experiment runs for later debugging.

See here for more information on Prompt Playground, or jump into the platform to try it out for yourself.

09.26.2024: Authentication & RBAC 🔐

Available in Phoenix 5.0+

07.18.2024: Guardrails AI Integrations 💂

Available in Phoenix 4.11.0+

07.11.2024: Hosted Phoenix and LlamaTrace 💻

Phoenix is now available for deployment as a fully hosted service.

07.03.2024: Datasets & Experiments 🧪

Available in Phoenix 4.6+