
- Learn how to craft prompts, tools, and evaluators in Phoenix.

- Refine your prompts to understand the power of ReAct prompting.

- Leverage Phoenix and LLM as a Judge techniques to evaluate accuracy at each step, gaining insight into the model’s thought process.

- Learn how to apply ReAct prompting in real-world scenarios for improved task execution and problem-solving.

Set up Dependencies and Keys

Load Dataset Into Phoenix

This dataset contains 20 customer service questions that a customer might ask a store’s chatbot. As we dive into ReAct prompting, we’ll use these questions to guide the LLM in selecting the appropriate tools. Here, we also import the Phoenix Client, which lets us create and modify prompts directly within the notebook while syncing changes to the Phoenix UI. After running this cell, the dataset should appear under the Datasets tab in Phoenix.

Define Tools

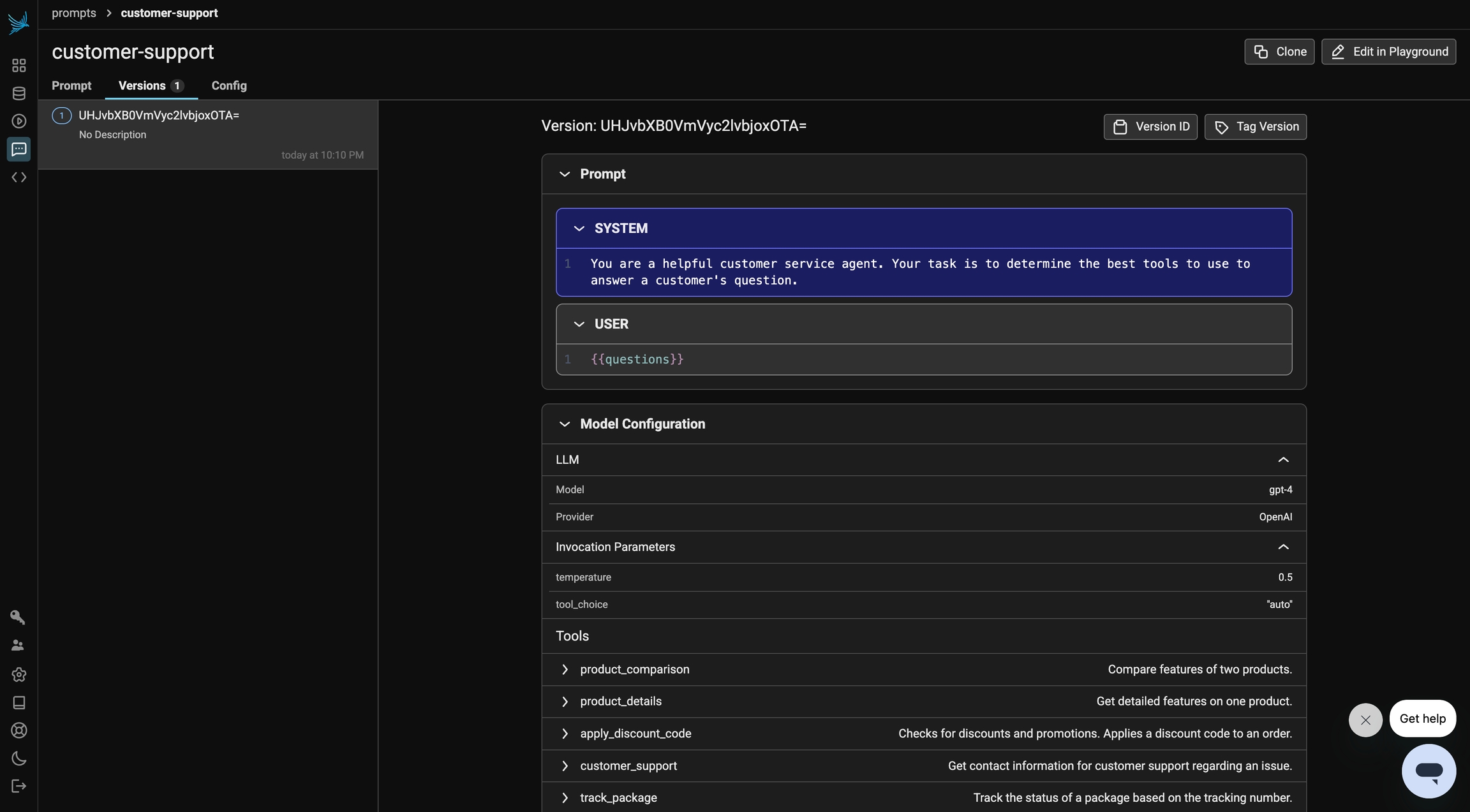

Next, let’s define the tools available for the LLM to use. We have five tools at our disposal, each serving a specific purpose: Product Comparison, Product Details, Discounts, Customer Support, and Track Package. Depending on the customer’s question, the LLM will determine the optimal sequence of tools to use.

Initial Prompt

Let’s start by defining a simple prompt that instructs the system to utilize the available tools to answer the questions. The choice of which tools to use, and how to apply them, is left to the model’s discretion based on the context of each customer query.
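As a rough illustration of the five tools and the simple initial prompt described above, the sketch below declares the tools as plain dictionaries in the OpenAI function-calling format. The tool names follow the text, but the snake_case identifiers, the parameter names, the descriptions, the prompt wording, and the helpers `make_tool` and `build_messages` are all assumptions made for this example and are not taken from the notebook.

```python
# Hypothetical tool schemas in the OpenAI function-calling format.
# Tool names follow the text; parameter names are illustrative assumptions.


def make_tool(name, description, params):
    """Build one tool spec whose parameters are all required strings."""
    return {
        "type": "function",
        "function": {
            "name": name,
            "description": description,
            "parameters": {
                "type": "object",
                "properties": {p: {"type": "string"} for p in params},
                "required": list(params),
            },
        },
    }


TOOLS = [
    make_tool("product_comparison", "Compare two products.",
              ["product_a_id", "product_b_id"]),
    make_tool("product_details", "Look up details for one product.",
              ["product_id"]),
    make_tool("discounts", "Find discounts that apply to a product.",
              ["product_id"]),
    make_tool("customer_support", "Route an issue to customer support.",
              ["issue_type"]),
    make_tool("track_package", "Get the status of a shipment.",
              ["tracking_number"]),
]

# Assumed wording for a simple prompt that leaves tool choice to the model.
INITIAL_SYSTEM_PROMPT = (
    "You are a helpful customer service agent. "
    "Use the available tools to answer the customer's question."
)


def build_messages(question):
    """Pair the system prompt with one customer question."""
    return [
        {"role": "system", "content": INITIAL_SYSTEM_PROMPT},
        {"role": "user", "content": question},
    ]
```

Passing `TOOLS` and `build_messages(question)` to a chat completion call would let the model pick tools on its own, which is the baseline behavior that the later ReAct prompt is compared against.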

In the evaluate_response function, we define our LLM as a Judge evaluator. Finally, we run our experiment.
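To make the LLM as a Judge idea concrete, here is a minimal offline sketch of what such an evaluator could look like. It is not the notebook's implementation: the template wording, the `correct`/`incorrect` labels, the function signature, and the injected `judge` callable (which stands in for a real LLM call) are all assumptions chosen so the example runs without an API key.

```python
# Sketch of an LLM as a Judge evaluator. The judge is passed in as a callable
# so the example runs offline; in practice it would wrap a real LLM call.

JUDGE_TEMPLATE = (
    "You are evaluating a customer service assistant.\n"
    "Question: {question}\n"
    "Tool calls chosen by the assistant: {tool_calls}\n"
    "Answer with exactly one word: correct or incorrect."
)


def evaluate_response(question, tool_calls, judge):
    """Return 1.0 if the judge labels the tool calls correct, else 0.0."""
    prompt = JUDGE_TEMPLATE.format(question=question, tool_calls=tool_calls)
    label = judge(prompt).strip().lower().rstrip(".")
    # Exact match matters here: "incorrect" contains the substring "correct".
    return 1.0 if label == "correct" else 0.0


def stub_judge(prompt):
    """Hypothetical stand-in for an LLM: approves any plan that tracks a package."""
    return "correct" if "track_package" in prompt else "incorrect"


score = evaluate_response(
    "Where is my order?",
    'track_package(tracking_number="ABC123")',
    stub_judge,
)
```

Returning a numeric score keeps the results easy to aggregate across the dataset's questions when comparing one prompt version against another.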

Experiment

ReAct Prompt

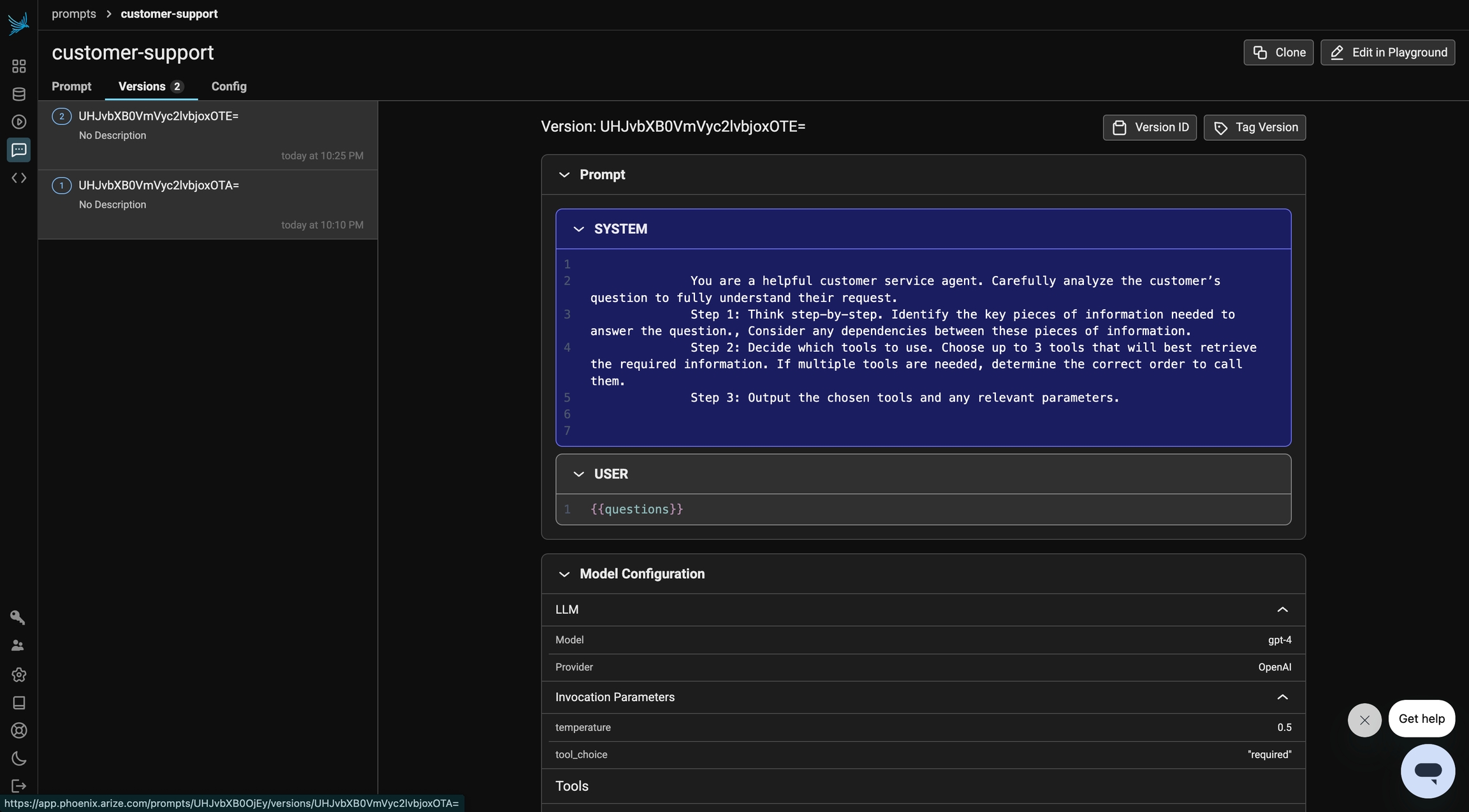

Next, we iterate on our system prompt using ReAct Prompting techniques. We emphasize that the model should think through the problem step-by-step, break it down logically, and then determine which tools to use and in what order. The model is instructed to output the relevant tools along with their corresponding parameters. This approach differs from our initial prompt because it encourages reasoning before action, guiding the model to select the best tools and parameters based on the specific context of the query, rather than simply using predefined actions.
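The reasoning-then-action pattern described above might be sketched as follows. The prompt wording, the `Thought:` and `Action:` line format, and the `parse_actions` helper are assumptions for illustration only; the notebook's actual ReAct prompt may differ and may rely on native tool calling rather than text parsing.

```python
import re

# Assumed ReAct-style system prompt: the model reasons first, then lists tools.
REACT_SYSTEM_PROMPT = """You are a customer service agent with access to tools.
For each question, reason before you act:
1. Thought: restate the problem and break it into logical steps.
2. Thought: decide which tools are needed and in what order.
3. Then list each tool call on its own line in this form:
Action: tool_name(param="value")
"""

# Matches a full line such as: Action: track_package(tracking_number="ABC123")
ACTION_RE = re.compile(r"^Action:\s*(\w+)\((.*)\)\s*$", re.MULTILINE)
# Matches key="value" pairs inside the parentheses.
PARAM_RE = re.compile(r'(\w+)\s*=\s*"([^"]*)"')


def parse_actions(model_output):
    """Extract (tool_name, params) pairs, in order, from the model's text."""
    actions = []
    for name, raw_args in ACTION_RE.findall(model_output):
        actions.append((name, dict(PARAM_RE.findall(raw_args))))
    return actions


# Hand-written sample output, not produced by a real model.
sample_output = (
    "Thought: The customer wants to know where their order is.\n"
    "Thought: I should track the package, then offer support if it is late.\n"
    'Action: track_package(tracking_number="ABC123")\n'
    'Action: customer_support(issue_type="late delivery")\n'
)
planned = parse_actions(sample_output)
```

Keeping the thoughts and actions on separate, labeled lines makes each step easy to inspect, which is what allows an evaluator to check the chosen tools and parameters against the question.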

Experiment