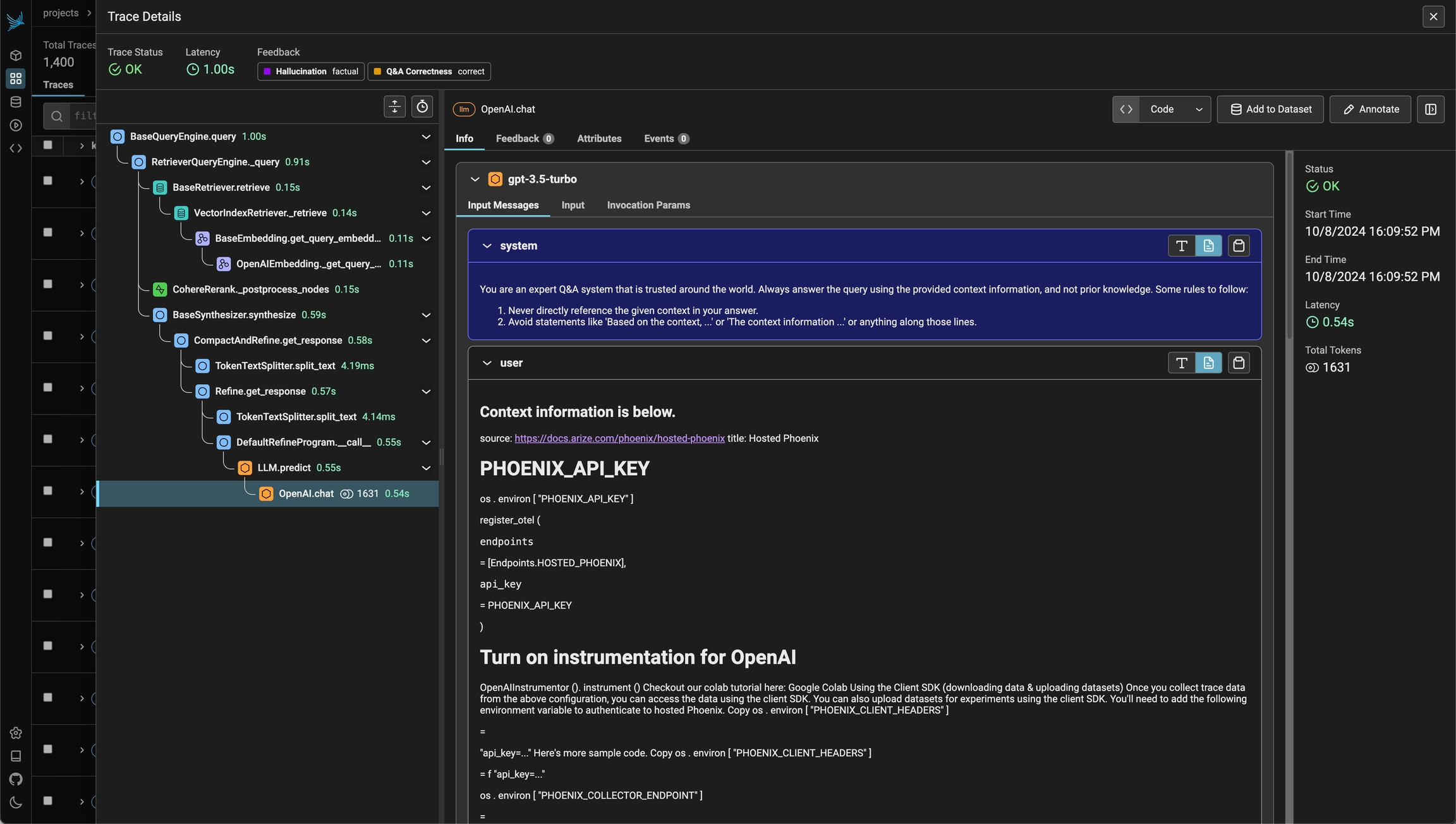

View the inner workings of your LLM application

Features

Below are some of the main capabilities:

- Application Latency: Identify and address slow invocations of LLMs, Retrievers, and other components within your application so you can optimize performance and responsiveness.

- Token Usage: Get a detailed breakdown of token usage for your LLM calls so you can identify and optimize the most expensive LLM invocations.

- Runtime Exceptions: Capture and inspect critical runtime exceptions, such as rate-limiting events, so you can proactively address and mitigate potential issues.

- Retrieved Documents: Inspect the documents retrieved during a Retriever call, including their scores and the order in which they were returned, to gain insight into the retrieval process.

- Embeddings: Examine the embedding text used for retrieval and the underlying embedding model so you can validate and refine your embedding strategies.

- LLM Parameters: Inspect the parameters used when calling an LLM, such as temperature and system prompts, to verify the configuration and aid debugging.

- Prompt Templates: See the prompt templates used during the prompting step and the variables that were applied, so you can fine-tune and improve your prompting strategies.

- Tool Descriptions: View the descriptions and function signatures of the tools your LLM has been given access to, to better understand and control your LLM’s capabilities.

- LLM Function Calls: For LLMs with function-calling capabilities (e.g., OpenAI), inspect the function selection and function messages in the input to the LLM to help debug and optimize your application.

Next steps

- To get started, check out the Quickstart guide.

- Read more about What are Traces and How Tracing Works.

- Check out the How-To Guides for specific tutorials.