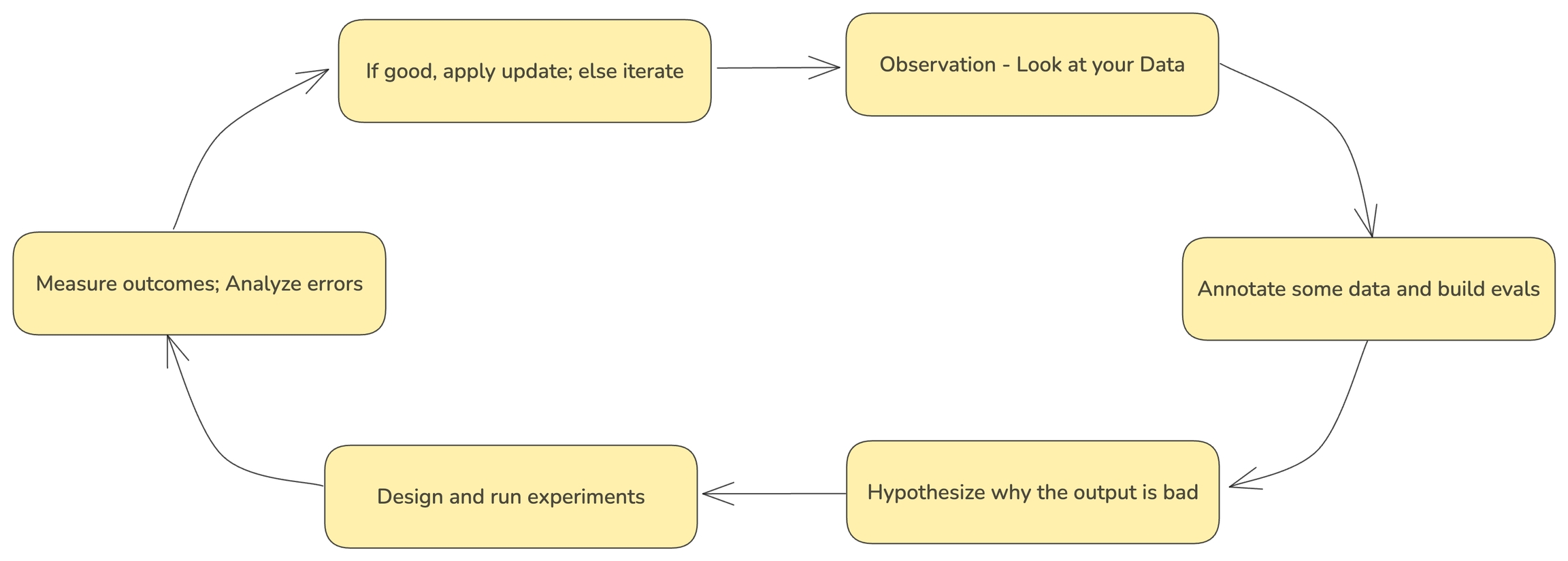

Applying the scientific method to building AI products - By Eugene Yan

Annotations let you:

- Track performance over time

- Identify areas for improvement

- Compare different model versions or prompts

- Gather data for fine-tuning or retraining

- Provide stakeholders with concrete metrics on system effectiveness

Guides

- To learn how to configure annotations and to annotate through the UI, see Annotating in the UI

- To learn how to add human labels to your traces, either manually or programmatically, see Annotating via the Client

- To learn how to evaluate traces captured in Phoenix, see Running Evals on Traces

- To learn how to upload your own evaluation labels into Phoenix, see Log Evaluation Results

Adding manual annotations to traces