Credentials

There are two ways to provide your API keys securely: store them in your browser's local storage, or set them as environment variables on the server side. If both are set, the credential stored in the browser takes precedence.

Option 1: Store API Keys in the Browser

API keys can be entered in the playground application via the API Keys dropdown menu, which stores them in the browser. Navigate to settings and set your API keys.

Option 2: Set Environment Variables on Server Side

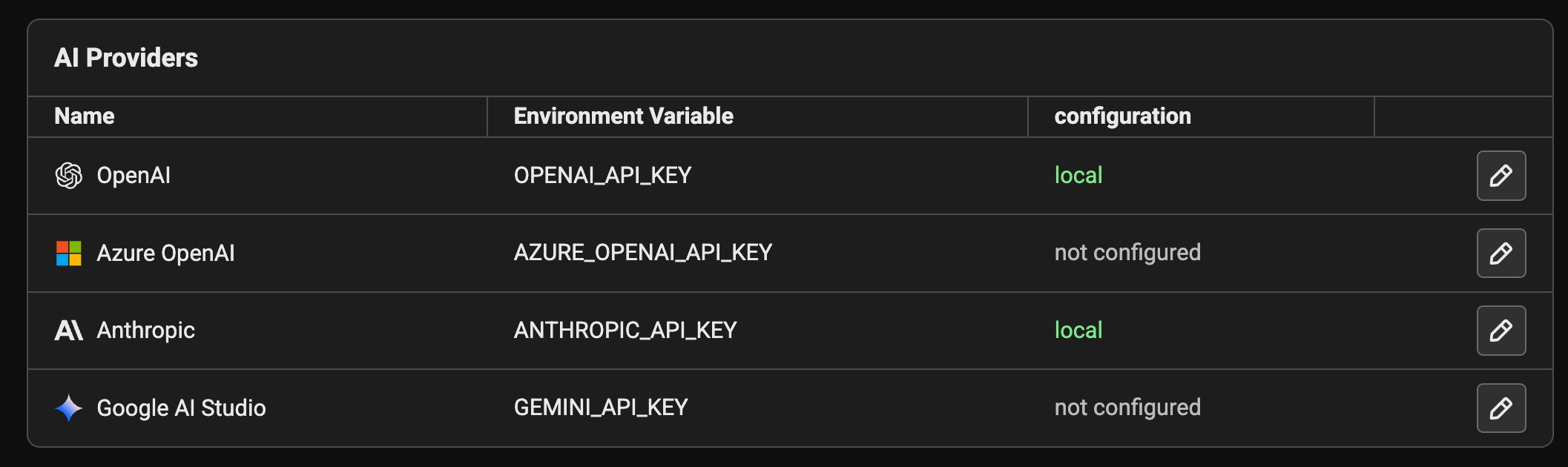

Available on self-hosted Phoenix

If the following variables are set in the server environment, they’ll be used at API invocation time.

| Provider | Environment Variable | Platform Link |
|---|---|---|
| OpenAI | OPENAI_API_KEY | https://platform.openai.com/ |
| Azure OpenAI | AZURE_OPENAI_API_KEY, AZURE_OPENAI_ENDPOINT, OPENAI_API_VERSION | https://azure.microsoft.com/en-us/products/ai-services/openai-service/ |
| Anthropic | ANTHROPIC_API_KEY | https://console.anthropic.com/ |
| Gemini | GEMINI_API_KEY or GOOGLE_API_KEY | https://aistudio.google.com/ |
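
As a quick sanity check before starting a self-hosted server, the variables in the table above can be tested from Python. The following is only a minimal sketch, not part of Phoenix: the `REQUIRED` mapping, `is_configured`, and `resolve_api_key` are hypothetical names, and it assumes that every variable listed for a provider must be present (with either Gemini variable being sufficient). `resolve_api_key` illustrates the precedence rule described earlier, where a browser credential wins over a server-side one.

```python
import os
from typing import Mapping, Optional

# Variable names come from the table above. Each provider maps to a list of
# groups; every group must be satisfied, and any one name in a group suffices.
# (Assumption: all listed Azure variables are needed together.)
REQUIRED = {
    "OpenAI": [("OPENAI_API_KEY",)],
    "Azure OpenAI": [
        ("AZURE_OPENAI_API_KEY",),
        ("AZURE_OPENAI_ENDPOINT",),
        ("OPENAI_API_VERSION",),
    ],
    "Anthropic": [("ANTHROPIC_API_KEY",)],
    "Gemini": [("GEMINI_API_KEY", "GOOGLE_API_KEY")],
}


def is_configured(provider: str, env: Mapping[str, str] = os.environ) -> bool:
    """Return True if every variable group for the provider has a non-empty value."""
    return all(any(env.get(name) for name in group) for group in REQUIRED[provider])


def resolve_api_key(browser_key: Optional[str], server_key: Optional[str]) -> Optional[str]:
    """Hypothetical helper: prefer the browser credential, else fall back to the server one."""
    return browser_key or server_key


if __name__ == "__main__":
    for provider in REQUIRED:
        status = "configured" if is_configured(provider) else "not configured"
        print(f"{provider}: {status}")
```

Running the script in the same environment as the server prints one line per provider, which makes a missing or misspelled variable easy to spot.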

For Azure, you can also set the server-side environment variables AZURE_TENANT_ID, AZURE_CLIENT_ID, and AZURE_FEDERATED_TOKEN_FILE to use WorkloadIdentityCredential.

Using OpenAI Compatible LLMs

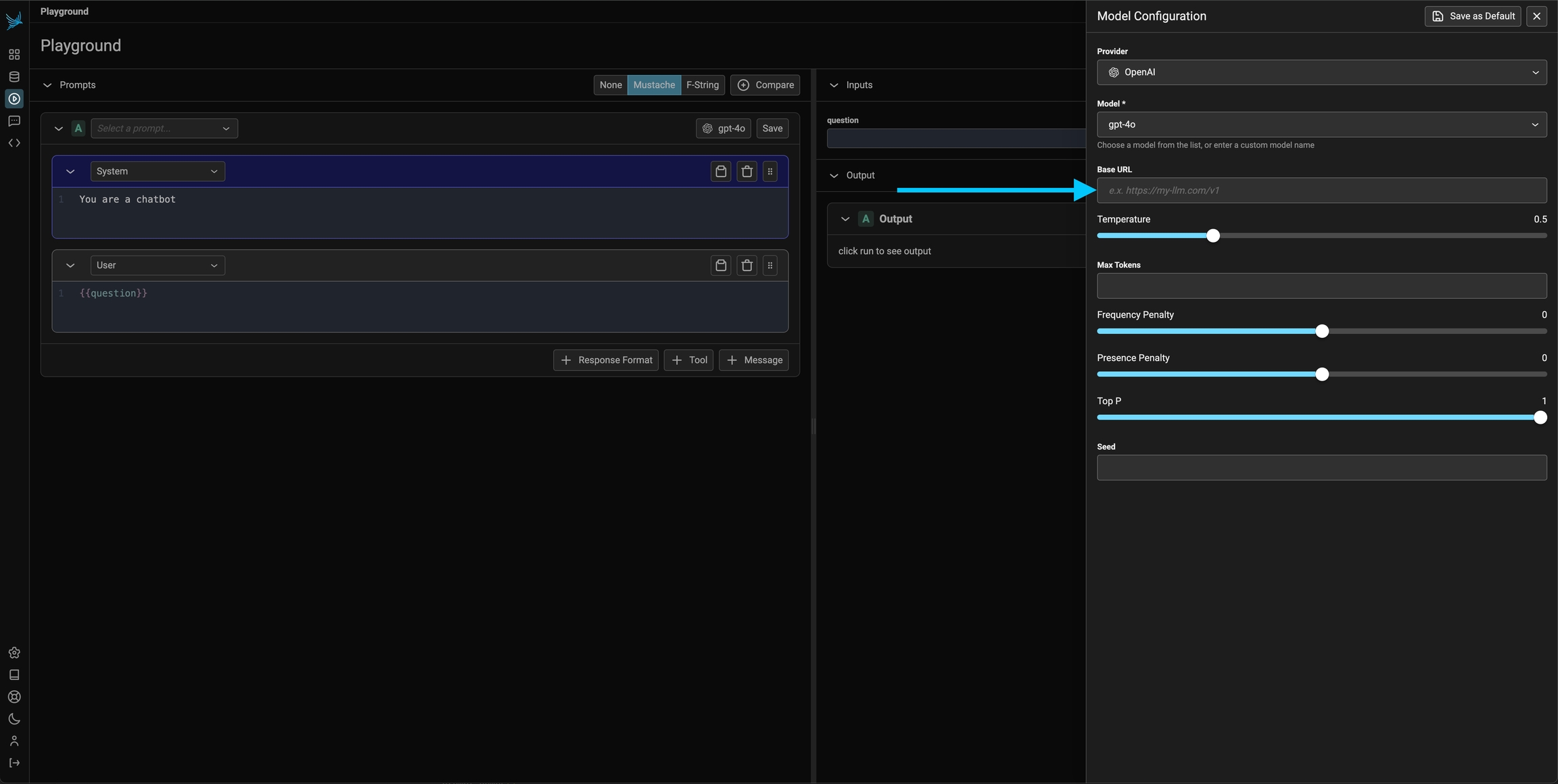

Option 1: Configure the base URL in the prompt playground

Because the base URL of the OpenAI client is configurable, you can use the prompt playground with a variety of OpenAI-compatible LLMs such as Ollama, DeepSeek, and more.

Simply insert the base URL of the OpenAI-compatible LLM provider.

If you are using an LLM provider, you must set the OpenAI API key to that provider’s API key for it to work.

Option 2: Server side configuration of the OpenAI base URL

Optionally, the server can be configured with the OPENAI_BASE_URL environment variable to target any OpenAI-compatible REST API.
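
To make the effect of the base URL concrete, here is a rough sketch of how a request to an OpenAI-compatible endpoint could be assembled using only the Python standard library. It is not how Phoenix itself issues requests. The helper `build_chat_request` is hypothetical, the `/chat/completions` path follows the OpenAI REST convention, and the Ollama URL `http://localhost:11434/v1` and model name `llama3` in the usage lines are assumptions about a local setup.

```python
import json
import os
import urllib.request
from typing import Optional

DEFAULT_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(
    model: str,
    prompt: str,
    api_key: str,
    base_url: Optional[str] = None,
) -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    The base URL is taken from the argument, then from OPENAI_BASE_URL,
    then from the default OpenAI endpoint.
    """
    base = (base_url or os.environ.get("OPENAI_BASE_URL") or DEFAULT_BASE_URL).rstrip("/")
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base}/chat/completions",
        data=body,
        headers={
            # The provider's own key goes where the OpenAI key would normally go.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Assumed local Ollama setup; adjust the URL, key, and model to your provider.
    req = build_chat_request("llama3", "Hello", api_key="ollama",
                             base_url="http://localhost:11434/v1")
    print(req.get_method(), req.full_url)
```

Only the host portion changes between providers; the path, payload shape, and bearer-token header stay the same, which is why a single base URL setting is enough to retarget the playground. Passing the returned object to `urllib.request.urlopen` would actually send it.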

Custom Headers

Phoenix supports adding custom HTTP headers to requests sent to AI providers. This is useful for additional credentials, routing needs, or cost tracking when using custom LLM proxies.

Configuring Custom Headers

- Click on the model configuration button in the playground

- Scroll down to the “Custom Headers” section

- Add your headers in JSON format: